3D-printed firearms are a growing problem.

In 2026, Colorado, California, and New York have moved to restrict this, but little to no software exists to enforce these rules.

- Since 2017, ghost gun recoveries have surged 1,600%, with over 92,000 seized by law enforcement through 2023.

- As of now, 3D printers have no guardrails. Virtually no software can detect or block prohibited blueprints.

Context

A ghost gun is an untraceable firearm, often 3D-printed at home, that requires no background check and carries no serial number. Over 92,000 have been seized since 2017, with 1,700 tied to homicides. The printer software that produces them has zero awareness of what it builds.

1. Relevant information

The blind spot

Slicers turn meshes into G-code with no semantic reading of the part and no built-in detection or compliance check at that boundary. This blind translation is increasingly a legal and safety problem.

Ghost guns

Unserialized parts and firearms from desktop printing bypass ordinary checks. Today's slicers cannot tell benign geometry from regulated hardware.

2026 regulations

States are moving: Colorado, California (printer blocks by 2029), and New York-style proposals. Penalties reach $25,000 per violation. Policy only has teeth if the check sits where the mesh becomes G-code, inside the slicer or alongside its path.

2. Where things stand

- Few real integrations stop malicious G-code at the source; the mesh-to-toolpath step remains largely ungoverned.

- This project aims to add friction: make unregistered firearm prints harder, not invisible.

Key terms

- Slicer: software that turns a 3D model into print instructions; usually the last software stop before the machine.

- G-code: the low-level moves and extrusion commands the printer executes.

- Mesh: the triangle soup (STL/OBJ/GLB) the slicer ingests before producing G-code.

The project

Markey*

The frontier firearm-part identification and restriction classifier for slicer software.

Markey classifies printable geometry at the mesh stage and surfaces a policy signal along with a confidence score, alternate labels, and an explanation, so high-risk jobs can be held or blocked before G-code is committed.

01

Renders + classify

Consistent renders from the mesh feed a single classification pass, giving repeatable, auditable inputs.

02

Where it plugs in

Hooks in at export, at the queue, or pre-print: anywhere a mesh exists but the G-code is not yet final.

03

Dashboard

Operators see the verdict, its uncertainty, and which evidence drove it: enough to intervene without digging through logs.

* Named for Senator Ed Markey, who has pushed legislation on 3D-printed guns for years.

How it works

Markey is a fine-tuned model built on Qwen3 0.6B embeddings. It was trained with a hybrid approach: 29-dimensional G-code feature extraction and a projection layer on text embeddings. In this setup, feature extraction does most of the heavy lifting, while the text pathway serves as a supplementary classifier.
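The page doesn't say exactly how the two pathways are combined. A minimal sketch, assuming simple late fusion: the 29-dimensional G-code feature vector and a linearly projected text embedding feed one logistic output. The helpers `project` and `hybrid_score` and all weights are hypothetical; a trained model would learn them, and the real embedding would come from the Qwen3 encoder rather than a hand-written list.

```python
import math
from typing import List, Sequence

N_GCODE_FEATURES = 29  # size of the G-code feature vector described above


def project(embedding: Sequence[float], weights: Sequence[Sequence[float]]) -> List[float]:
    """Hypothetical linear projection: one output per row of `weights` (no bias)."""
    return [sum(w * x for w, x in zip(row, embedding)) for row in weights]


def _sigmoid(z: float) -> float:
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    ez = math.exp(z)
    return ez / (1.0 + ez)


def hybrid_score(
    gcode_features: Sequence[float],
    embedding: Sequence[float],
    proj_weights: Sequence[Sequence[float]],
    w_feat: Sequence[float],
    w_text: Sequence[float],
    bias: float = 0.0,
) -> float:
    """Hypothetical late-fusion score: P(firearm part) from both pathways."""
    if len(gcode_features) != N_GCODE_FEATURES:
        raise ValueError(f"expected {N_GCODE_FEATURES} G-code features")
    text_vec = project(embedding, proj_weights)
    # A linear head over [features; projected text] equals the sum of two dot products.
    logit = (
        sum(w * f for w, f in zip(w_feat, gcode_features))
        + sum(w * t for w, t in zip(w_text, text_vec))
        + bias
    )
    return _sigmoid(logit)
```

If the feature pathway "does most of the heavy lifting", that would show up in a trained model as `w_feat` carrying most of the logit's magnitude, with the text term nudging borderline cases.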

G-code is like assembly: it is human-readable, but not in a form that makes it easy to reason about what the printed part will look like. That plays to the tokenizer's strengths: tokens like G0 already land as natural string units, so we get reasonable results from Qwen's tokenizer without building a custom one from scratch.
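G-code's regular word structure (a letter followed by a number, as in `G1 X10.5 E0.03`) is what makes it tokenizer-friendly, and it also makes individual lines easy to parse. A minimal sketch of a line parser that handles `;` and `(...)` comments; `parse_gcode_line` is a hypothetical helper for illustration, not part of Markey.

```python
import re
from typing import Dict, Optional, Tuple

# One G-code word: a letter, optional spaces, then a signed number (e.g. G1, X10.5, E-0.02).
WORD = re.compile(r"([A-Za-z])\s*([-+]?(?:\d+\.?\d*|\.\d+))")


def parse_gcode_line(line: str) -> Optional[Tuple[str, Dict[str, float]]]:
    """Split one G-code line into (command, params), ignoring comments.

    Returns None for blank or comment-only lines.
    """
    code = line.split(";", 1)[0]                  # drop ';' end-of-line comments
    code = re.sub(r"\([^)]*\)", "", code).strip()  # drop '(...)' inline comments
    words = WORD.findall(code)
    if not words:
        return None
    letter, number = words[0]
    command = letter.upper() + number             # e.g. "G1", "M104"
    params = {k.upper(): float(v) for k, v in words[1:]}
    return command, params
```

For example, `parse_gcode_line("G1 X10.5 Y-2 E0.03 ; perimeter")` yields `("G1", {"X": 10.5, "Y": -2.0, "E": 0.03})`.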

Mesh in

Standard formats: STL, OBJ, GLB. The slicer UI is unchanged; this runs on the file.

Views + model

CuraEngine slices the file into G-code, which is then passed to our model.
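A minimal sketch of driving that slicing step from Python, assuming CuraEngine's command-line `slice` mode with `-j` (printer definition JSON), `-s key=value` (setting overrides), `-l` (model to load) and `-o` (G-code output). Check these against the usage text your CuraEngine build prints; in practice a usable slice usually also needs extruder definitions and more settings. The helper names are hypothetical.

```python
import subprocess
from pathlib import Path
from typing import Dict, List, Optional


def cura_slice_command(
    model: Path,
    output: Path,
    definition: Path,
    settings: Optional[Dict[str, str]] = None,
) -> List[str]:
    """Build a CuraEngine CLI invocation that slices one mesh to G-code."""
    cmd = ["CuraEngine", "slice", "-j", str(definition)]
    # Setting overrides are passed before the model so they apply to the whole job.
    for key, value in (settings or {}).items():
        cmd += ["-s", f"{key}={value}"]
    cmd += ["-l", str(model), "-o", str(output)]
    return cmd


def slice_to_gcode(
    model: Path,
    output: Path,
    definition: Path,
    settings: Optional[Dict[str, str]] = None,
) -> Path:
    """Run CuraEngine (must be on PATH) and return the G-code path for classification."""
    subprocess.run(cura_slice_command(model, output, definition, settings), check=True)
    return output
```

Keeping command construction separate from execution makes the exact invocation easy to log alongside the verdict, which helps with the auditability goal above.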

Dashboard

Label, confidence, alternate guesses, and which views mattered. Review or stop before print instructions go out.

G-code

Mesh-only checks don't catch everything: for example, geometry hidden inside a shell can show up differently in toolpaths. This prototype focuses on the mesh and the handoff before printing; G-code represents the paths the printer will actually execute.

Between the slicer and the printer

The target is the gap between slicing and printing: after you have a part file, before the motors run. What matters is the file and the moment it meets hardware.

Other places it can plug in

Raspberry Pi (Klipper)

Many setups already use a small computer to run the printer. A check could sit on that box before the file reaches the printer board: the last mile before printing.

Cloud + networked queues

If jobs go through an app, server, or LAN before the printer, the same idea applies: inspect while it's still digital.

Resin, SLA + industrial

Same pattern: attach where the file already flows; keep image and policy work off the device that moves axes or resin.

Demo UI

Classification output

- Label, confidence, short summary, model reasoning

- Six orthographic renders (front, back, left, right, top, bottom)

Dashboard

- Policy verdict (Restricted / Accepted / Review), narrative, status line

- Risk index and confidence bars

- Alternate labels, per-view weights, pipeline timings
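The dashboard reports one of three policy verdicts, but the page doesn't say how a score becomes a verdict. A minimal sketch, assuming two hypothetical probability thresholds (`RESTRICT_AT`, `REVIEW_AT`) that would need tuning on validation data:

```python
from dataclasses import dataclass

# Hypothetical cut-offs: placeholders, not values from Markey.
RESTRICT_AT = 0.90
REVIEW_AT = 0.30


@dataclass(frozen=True)
class Verdict:
    label: str      # "Restricted", "Review", or "Accepted"
    risk: float     # classifier probability that the job is a firearm part
    hold_job: bool  # True if the print should be held before G-code is sent


def policy_verdict(p_firearm: float) -> Verdict:
    """Map a firearm probability to one of the dashboard's three verdicts."""
    if not 0.0 <= p_firearm <= 1.0:
        raise ValueError("p_firearm must be a probability in [0, 1]")
    if p_firearm >= RESTRICT_AT:
        return Verdict("Restricted", p_firearm, hold_job=True)
    if p_firearm >= REVIEW_AT:
        # Uncertain band: hold for a human rather than risk a missed firearm part.
        return Verdict("Review", p_firearm, hold_job=True)
    return Verdict("Accepted", p_firearm, hold_job=False)
```

Holding both Restricted and Review jobs trades some operator effort for fewer missed firearm parts, the failure mode the results below treat as most important.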

Extra information

2026 hardware mandates

New York Governor Kathy Hochul and Manhattan DA Alvin Bragg are pushing mandates requiring 3D printers to include built-in software to block ghost gun production. Hard engineering controls reduce machinery accidents by over 70%; this project covers one small slice of that (mesh classification) and is not a product claim.

Intellectual property

IP theft costs the industrial sector hundreds of billions of dollars annually. The same class of problem (accountability for what gets printed) shows up outside firearms, but it is not the focus here.

Results

Markey-v1 Evaluations

Markey is a 0.6B-parameter embedding-based model trained on a labeled dataset of gun and non-gun 3D-printable meshes sliced to G-code. The dataset is available on Hugging Face.

- Validation accuracy: 99.3%

- False negatives: 0

- Test samples: 400

- Epochs to converge: ~8

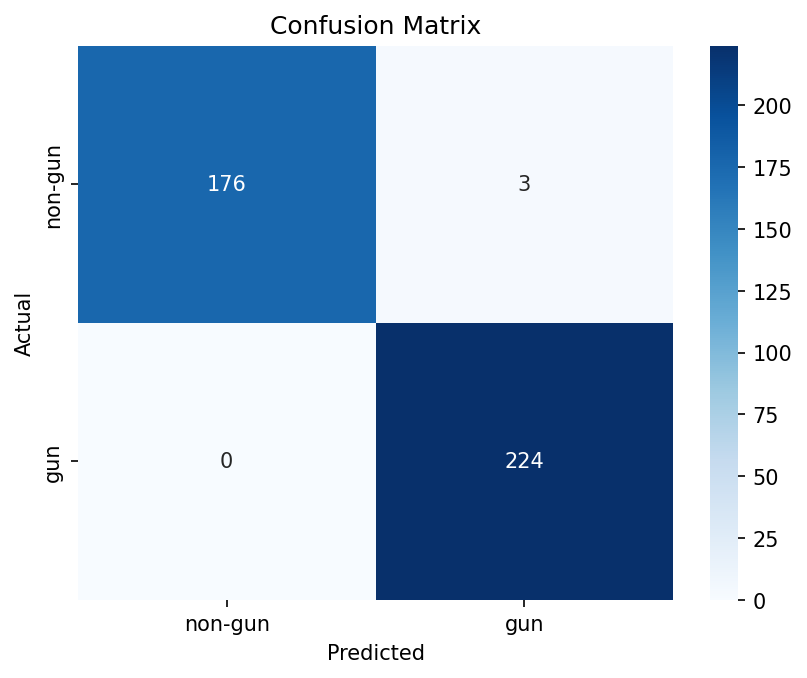

Confusion matrix

Binary classification on the held-out test set. The model never missed an actual firearm part, the failure mode that matters most for a safety gate.

- True negatives: 176

- True positives: 224

- False positives: 3

- False negatives: 0
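The summary rates follow directly from the four counts. A short check (the four cells as listed sum to 403, so the total here is taken from the cells rather than the 400-sample figure); `binary_metrics` is just an illustrative helper:

```python
from typing import Dict


def binary_metrics(tp: int, tn: int, fp: int, fn: int) -> Dict[str, float]:
    """Standard rates for a binary classifier from confusion-matrix counts."""
    total = tp + tn + fp + fn
    return {
        "accuracy": (tp + tn) / total,
        "precision": tp / (tp + fp),          # flagged jobs that really are firearm parts
        "recall": tp / (tp + fn),             # firearm parts that were caught
        "specificity": tn / (tn + fp),        # benign parts correctly let through
        "false_positive_rate": fp / (fp + tn),
    }


m = binary_metrics(tp=224, tn=176, fp=3, fn=0)
for name, value in m.items():
    print(f"{name}: {value:.4f}")
```

Accuracy works out to 400/403, about 99.26%, which rounds to the 99.3% headline; recall is exactly 1.0 because there are no false negatives, while the 3 false positives cost about 1.3 points of precision.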

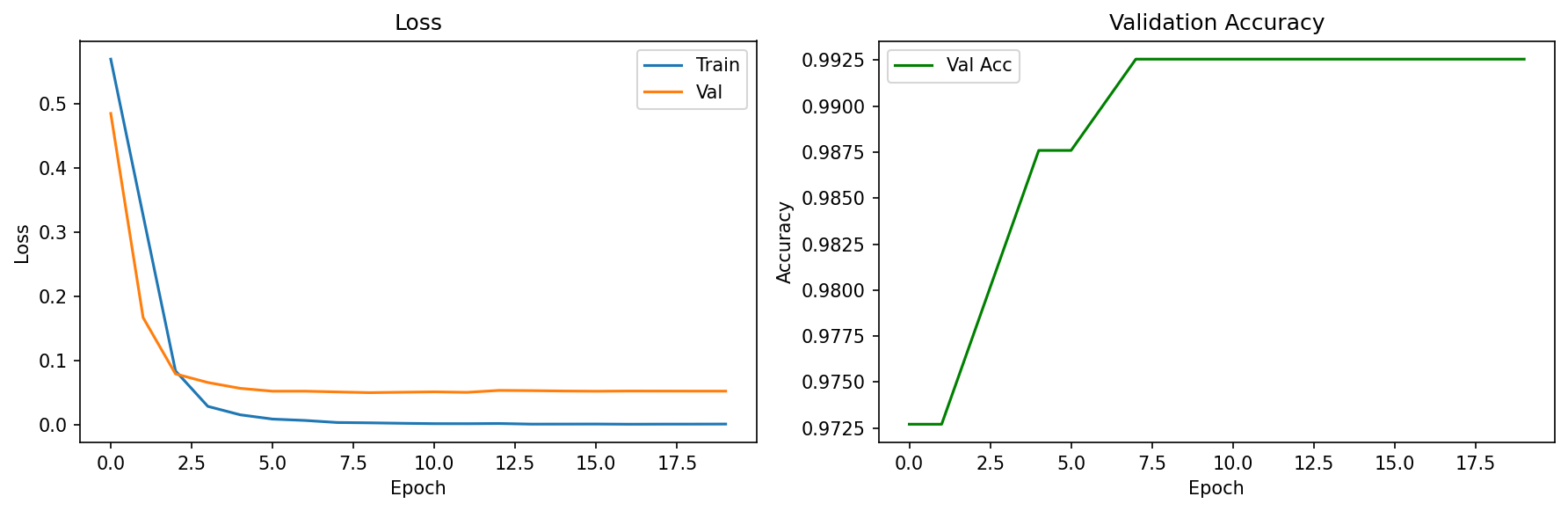

Training curves

Loss converges within the first few epochs with no divergence between train and validation. Validation accuracy reaches 99.3% by epoch 8 and holds flat. The model learns the boundary quickly and shows no sign of overfitting.

- Final train loss: < 0.01

- Final val loss: ~0.05

- Peak val accuracy: 99.3%

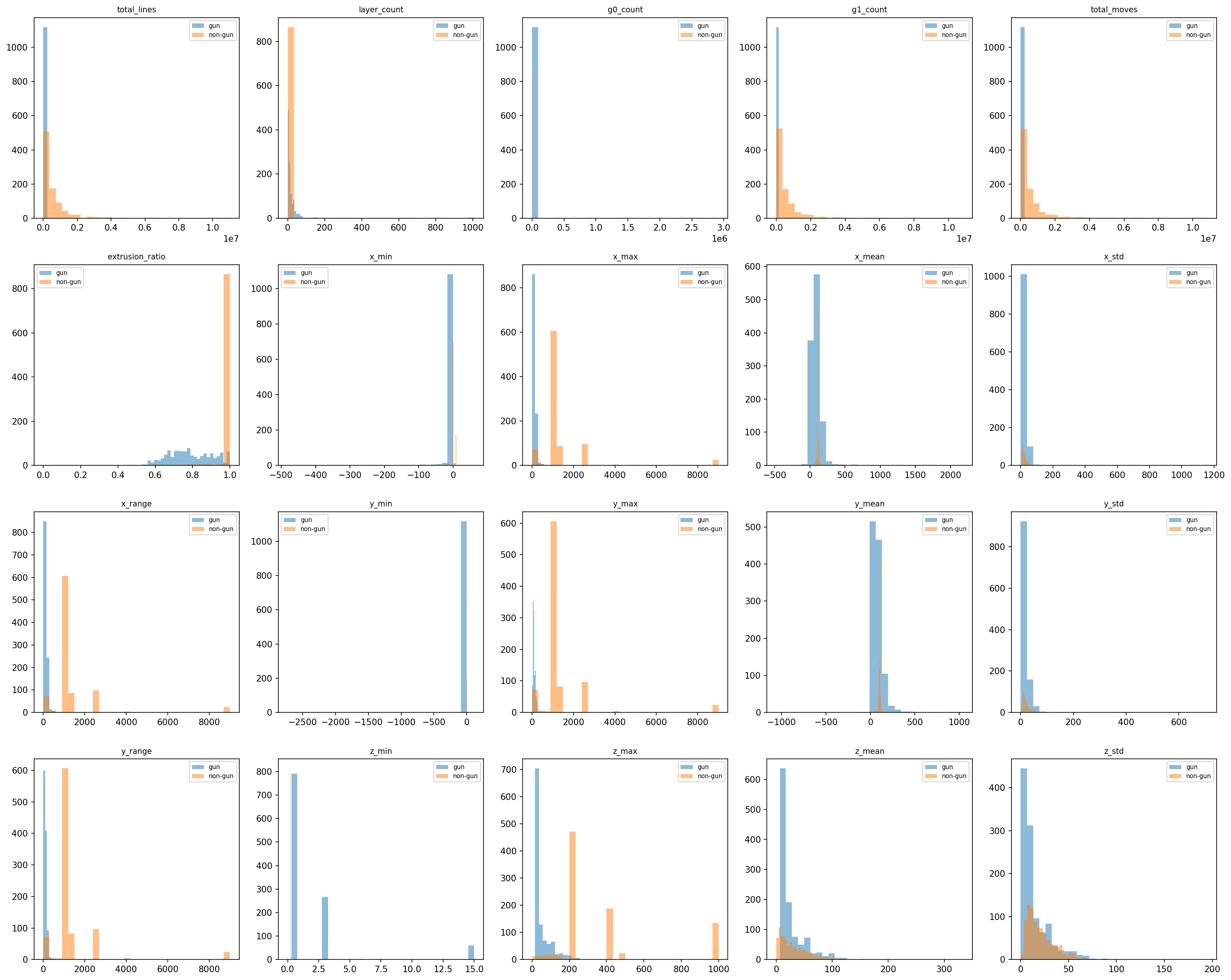

Feature distributions

Per-feature histograms split by class (gun vs. non-gun). Several G-code-derived features, particularly movement counts, coordinate ranges, and extrusion ratios, show clear separation between classes, suggesting the classifier relies on structurally grounded signal rather than noise.
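The feature families named above (movement counts, coordinate ranges, extrusion ratios) can all be computed in one pass over the file. A minimal sketch of a few of them; it is a hypothetical subset, not Markey's actual 29-dimensional extractor, and it assumes absolute positioning (G90/M82), ignores arcs (G2/G3), and treats any G0/G1 move that doesn't advance E as non-extruding.

```python
from typing import Dict, Iterable, List


def gcode_features(lines: Iterable[str]) -> Dict[str, float]:
    """Illustrative whole-file features from G-code text lines."""
    extruding = non_extruding = 0
    coords: Dict[str, List[float]] = {"X": [], "Y": [], "Z": []}
    last_e = 0.0
    filament = 0.0
    for raw in lines:
        words = raw.split(";", 1)[0].split()  # drop comments, split into words
        if not words:
            continue
        cmd, params = words[0].upper(), {}
        for word in words[1:]:
            try:
                params[word[0].upper()] = float(word[1:])
            except ValueError:
                pass  # skip malformed words such as a bare "X"
        if cmd == "G92" and "E" in params:
            last_e = params["E"]  # extruder position reset
            continue
        if cmd not in ("G0", "G1"):
            continue
        for axis, values in coords.items():
            if axis in params:
                values.append(params[axis])
        e = params.get("E")
        if e is not None and e > last_e:
            extruding += 1
            filament += e - last_e
        else:
            non_extruding += 1  # travel moves and retractions
        if e is not None:
            last_e = e
    moves = extruding + non_extruding
    feats = {
        "move_count": float(moves),
        "extruding_moves": float(extruding),
        "extrusion_ratio": extruding / moves if moves else 0.0,
        "filament_mm": filament,
    }
    for axis, values in coords.items():
        feats[f"{axis.lower()}_range"] = max(values) - min(values) if values else 0.0
    return feats
```

Whole-file aggregates like these are cheap and size-independent, which is one reason a fixed-length feature vector sidesteps the context-window limits discussed in the FAQ.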

FAQ


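Why analyze raw G-code instead of just rendering the 3D model?

Mesh-only checks don't catch everything: geometry hidden inside a shell, for example, can show up differently in toolpaths. G-code represents the paths the printer will actually execute, so classifying it checks what will really be built.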

Can't general AI models (like ChatGPT or Claude) just read the G-code?

No. Frontier language models are built for sequential human language, not millions of lines of spatial coordinates. Typical 3D prints generate G-code files large enough to quickly exceed the memory limits (context windows) of general-purpose models. Even when a model can ingest the file, it suffers from the "Lost in the Middle" phenomenon, failing to find specific patterns in highly repetitive data. Our model is purpose-built, using localized feature extraction to natively understand 3D spatial sequences.

In our testing, the frontier models we tried (gpt-5.4-xhigh, opus-4.6-high, and gemini-3.1-pro) all hallucinated and gave the wrong answer about what the G-code would print.